Ever heard of Project Catapult?

Project Catapult is the code name for a Microsoft Research (MSR) enterprise-level initiative that is transforming cloud computing. It began in 2010, when Microsoft realized that FPGAs offer a combination of speed, programmability, and flexibility well suited to delivering cutting-edge performance while keeping pace with rapid innovation. Five years later, Bing saw a 50% improvement in throughput after deploying FPGAs to accelerate search ranking.

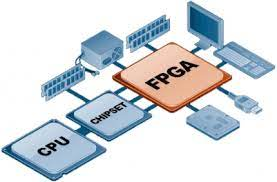

FPGAs have been around for a while, but MSR was the first to employ them for cloud computing. MSR demonstrated that FPGAs could deliver performance and efficiency without the cost, complexity, and risk of developing custom ASICs. So what exactly is an FPGA?

FPGA stands for Field-Programmable Gate Array. An FPGA can be configured to behave like almost any digital circuit you desire, and the magic is that nothing physically changes: you simply load a configuration into the FPGA and it begins functioning like the desired circuit. No fuss, no jumper wires, and no soldering.

How does an FPGA function?

An FPGA is made up of a vast number of programmable logic blocks (PLBs) that can be configured and interconnected to form digital circuits. You describe the circuit you want in a hardware description language (HDL). A synthesis tool transforms your HDL into a gate-level representation of the circuit, and a place-and-route tool then maps this representation onto your specific FPGA, producing the actual configuration data.
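To make "load a configuration and the same hardware becomes a different circuit" concrete, here is a minimal Python sketch. It assumes that each programmable logic block is built around a small lookup table (LUT), which is a common design but is not something the text above spells out. The `LookupTable` class and `half_adder` function are hypothetical names for illustration, not part of any vendor toolchain.

```python
from itertools import product


class LookupTable:
    """A k-input lookup table: its configuration is a truth table of 2**k bits."""

    def __init__(self, num_inputs):
        self.num_inputs = num_inputs
        self.config = [0] * (2 ** num_inputs)  # unconfigured: always outputs 0

    def load(self, config_bits):
        """Load a new configuration; the object itself (the 'hardware') is unchanged."""
        if len(config_bits) != 2 ** self.num_inputs:
            raise ValueError("configuration has the wrong number of bits")
        self.config = list(config_bits)

    def evaluate(self, *inputs):
        """Treat the input bits as an address into the truth table."""
        address = 0
        for bit in inputs:
            address = (address << 1) | (bit & 1)
        return self.config[address]


# Two LUTs "wired" to the same inputs form a half adder:
# sum = a XOR b, carry = a AND b.
sum_lut = LookupTable(2)
carry_lut = LookupTable(2)
sum_lut.load([0, 1, 1, 0])    # XOR truth table for inputs 00, 01, 10, 11
carry_lut.load([0, 0, 0, 1])  # AND truth table for inputs 00, 01, 10, 11


def half_adder(a, b):
    """Return (sum, carry) for two one-bit inputs."""
    return sum_lut.evaluate(a, b), carry_lut.evaluate(a, b)


if __name__ == "__main__":
    for a, b in product([0, 1], repeat=2):
        print(a, b, half_adder(a, b))
```

Calling `sum_lut.load([0, 1, 1, 1])` would turn the same table into an OR gate without touching anything else. In a real flow, the synthesis and place-and-route tools work out these table contents and the wiring between blocks for you.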

Why Do Developers Select FPGAs?

FPGAs are incredibly adaptable. Before tape-out, chip designers build prototypes on FPGAs so they can refine a design incrementally. FPGAs are also widely used in commercial applications, such as telecommunications and avionics, where parallel computation is required and requirements change over time.

FPGAs also provide very precise timing and respond far faster than software. Consider a CPU that has to light an LED whenever a button is pressed. It can run a short loop of code that reads the state of one pin and then sets the state of another pin to match.

If the code is optimized, this loop can update millions of times per second, which may seem impressive. With an FPGA, however, all you need to do is connect the button to the LED. The button value enters through an input buffer, travels through the routing matrix, and leaves through an output buffer to the LED. This happens continuously, with no loop at all.

Now suppose the design takes on a second task that needs a fifth of a second to complete. On the CPU, if we still wish to link the button to the LED, our once-impressive response time of a millionth of a second degrades to a dismal fifth of a second. On the FPGA, the button and LED remain connected independently of the rest of the design, so the response stays nearly immediate.

This independence makes FPGAs an excellent choice for controlling anything that needs precise timing.
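The CPU side of the button-and-LED comparison can be sketched as a small timing model. This is a rough illustration under stated assumptions, not a measurement. It assumes a single-threaded loop that samples the button at the start of each iteration, writes the LED one microsecond later, and then runs any other work before sampling again. The function name and the microsecond figures are hypothetical, chosen to match the "millionth of a second" and "fifth of a second" mentioned above.

```python
def response_latency_us(press_time_us, poll_us, task_us):
    """Delay (in microseconds) between a button press and the LED update.

    Model: iteration k of the loop samples the button at k * (poll_us + task_us),
    writes the LED poll_us later, then spends task_us on unrelated work.
    """
    period_us = poll_us + task_us
    # Ceiling division: first iteration that starts at or after the press.
    iteration = -(-press_time_us // period_us)
    led_written_us = iteration * period_us + poll_us
    return led_written_us - press_time_us


POLL_US = 1          # assumed: an optimized loop, about a million updates per second
TASK_US = 200_000    # assumed: an extra task taking a fifth of a second

if __name__ == "__main__":
    # A press that arrives just after the button was sampled is the worst case.
    print("polling only:   ", response_latency_us(1, POLL_US, 0), "us")
    print("with extra task:", response_latency_us(1, POLL_US, TASK_US), "us")
```

The FPGA path is deliberately left out of the model: there the delay is set by signal propagation through the buffers and routing, which depends on the device, and it does not grow when unrelated logic is added elsewhere on the chip.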

Advantages of using FPGAs:

For some workloads, ML engineers can now run algorithms with lower latency on FPGAs than on GPUs. Bing deployed the first FPGA-accelerated Deep Neural Network (DNN), and MSR demonstrated that FPGAs could enable real-time AI, beating GPUs in ultra-low latency even without batching inference requests.

FPGAs are also breaking new ground in cloud computing, where they can act as a local compute accelerator, an inline processor, or a remote accelerator for distributed computing. In the inline role, all network traffic is routed through the FPGA, which can perform line-rate computation on even high-bandwidth network flows.

For applications like digital signal processing (DSP), machine learning, and cryptocurrency mining, FPGAs can be used to build digital logic optimized for the task. They are versatile enough that you can often implement a complete processor in an FPGA's digital logic. FPGAs are found in consumer electronics, satellites, and servers that carry out specialized calculations.

Custom circuit design can be challenging, and it is always worth asking whether there is a simpler way to accomplish the goal. For the jobs they excel at, though, FPGAs are incredible and often indispensable devices.