Causes and Effects of Climate Change

The world is going through a period of unprecedented global warming. Changes in rain and snow patterns, rising sea levels, and more frequent and more intense droughts, wildfires, storms, tornadoes, and hurricanes are all effects of global warming. These effects are now obvious, grow more severe every year, and are likely to change our lives and the lives of our children and grandchildren. Climate change is one of the biggest threats facing humanity today.

The greenhouse effect is the main factor contributing to the planet’s warming. Some feedback mechanisms, such as the evaporation of water from the oceans and the loss of reflective (high-albedo) polar ice, make the situation worse, leading to more warming and possibly, in the not too distant future, runaway global warming. In this article, we discuss the causes of climate change (mainly the greenhouse effect) and some of its impacts.

Greenhouse Effect

The greenhouse effect was first proposed by Joseph Fourier in the 1820s. While investigating the origins of ancient glaciers and the ice sheets that once covered most of Europe, he reasoned that something in the Earth’s atmosphere controlled the temperature at its surface. Decades later, John Tyndall took up Fourier’s idea and, using an experimental setup devised by Macedonio Melloni, showed that CO2 could absorb a lot more heat than other gases. This supported Fourier’s idea and identified CO2 as the atmospheric component he had been looking for. Many researchers tried to measure CO2 and warn the world about its increasing concentration, but it was only in the 1960s that C.D. Keeling’s measurements showed that the amount of CO2 in the atmosphere was rising quickly and that anthropogenic activities were to blame.

The greenhouse effect of water vapour is significantly greater than that of carbon dioxide. The amount of water vapour in the air is also about a hundred times higher than the amount of CO2; as a result, water vapour is responsible for more than 60% of the greenhouse warming effect. How much water vapour the air holds is determined by temperature. When the amount of CO2 in the air goes up, the global temperature rises by only a small amount, but that is enough to cause more water to evaporate from the oceans into the air, which warms the planet further. This feedback mechanism has the biggest impact on the world’s temperature. In this sense, the concentration of CO2 indirectly controls the amount of water vapour in the atmosphere: CO2 sets the baseline temperature, and the temperature sets the water vapour content. In fact, without the greenhouse effect that CO2 sustains, the planet’s surface temperature would be about 33°C lower than it is now.
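The amplification described above can be illustrated with a very simple feedback model. This is only a sketch under stated assumptions: the function names, the initial warming of 1.0°C, and the feedback fraction of 0.5 are hypothetical values chosen for illustration, not measured climate parameters. Each round of warming is assumed to trigger a further warming equal to a fixed fraction of the previous round.

```python
def amplified_warming(initial_warming_c: float, feedback_fraction: float,
                      rounds: int = 50) -> float:
    """Add up an initial warming plus successive feedback rounds.

    Each round contributes `feedback_fraction` times the previous round,
    so the total is a geometric series.
    """
    if not 0.0 <= feedback_fraction < 1.0:
        raise ValueError("feedback_fraction must be in [0, 1) to converge")
    total = 0.0
    increment = initial_warming_c
    for _ in range(rounds):
        total += increment
        increment *= feedback_fraction
    return total


def closed_form_warming(initial_warming_c: float, feedback_fraction: float) -> float:
    """Limit of the same geometric series: initial / (1 - f)."""
    return initial_warming_c / (1.0 - feedback_fraction)


if __name__ == "__main__":
    # Hypothetical numbers: 1.0 degC of direct warming, feedback fraction 0.5.
    print(amplified_warming(1.0, 0.5))
    print(closed_form_warming(1.0, 0.5))
```

With these illustrative numbers, a small direct warming ends up doubled, which is the qualitative point: the feedback, not the initial push, can account for most of the final temperature change.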

The Sun radiates energy onto the Earth at wavelengths ranging from 0.3 to 5 μm. This large flow of energy heats the atmosphere and everything on Earth. Much of this heat is radiated back towards space, day and night, but at longer wavelengths in the infrared range from 4 to 50 μm. According to Planck’s law of blackbody radiation, the temperature of a body determines the frequencies at which it emits heat; the Earth is far cooler than the Sun, so it emits at much longer wavelengths. As this energy leaves the Earth, it heats the molecules of greenhouse gases (such as H2O, CO2, and CH4) in the air.

Take CO2 and H2O as examples. The heating happens because the outgoing infrared frequencies match (resonate with) the natural vibrational frequencies of the carbon-oxygen bonds of CO2 (the asymmetric stretching mode at 4.26 μm and the bending mode at 14.99 μm) and of the oxygen-hydrogen bonds of H2O. The CO2 and H2O molecules heat up because their bond vibrations become more energetic. They then transfer this energy through collisions to the other molecules in the atmosphere (N2, O2), which helps maintain a stable temperature on Earth. The O-O bond in oxygen molecules and the N-N bond in nitrogen molecules vibrate at frequencies that differ from those of the outgoing radiation, so these gases are largely unaffected by the infrared radiation leaving the Earth.
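As a rough check on the wavelength ranges quoted above, Wien’s displacement law (a consequence of Planck’s law) gives the wavelength at which a blackbody emits most strongly. The sketch below assumes a solar surface temperature of about 5772 K and an average Earth surface temperature of about 288 K; both values, and the function names, are assumptions introduced for illustration and do not come from the article.

```python
WIEN_CONSTANT_UM_K = 2897.771955  # Wien displacement constant, in um*K

SUN_SURFACE_K = 5772.0    # assumed effective temperature of the Sun
EARTH_SURFACE_K = 288.0   # assumed mean surface temperature of the Earth


def peak_wavelength_um(temperature_k: float) -> float:
    """Wavelength (in micrometres) of peak blackbody emission."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return WIEN_CONSTANT_UM_K / temperature_k


def wavenumber_per_cm(wavelength_um: float) -> float:
    """Convert a wavelength in micrometres to a wavenumber in cm^-1."""
    return 1.0e4 / wavelength_um


if __name__ == "__main__":
    print(f"Sun peak:   {peak_wavelength_um(SUN_SURFACE_K):.2f} um")
    print(f"Earth peak: {peak_wavelength_um(EARTH_SURFACE_K):.2f} um")
    # CO2 vibration bands from the text (4.26 um and 14.99 um), as wavenumbers
    for band_um in (4.26, 14.99):
        print(f"{band_um} um = {wavenumber_per_cm(band_um):.0f} cm^-1")
```

Under these assumptions the Sun’s emission peaks at about 0.5 μm and the Earth’s at about 10 μm, inside the 0.3 to 5 μm and 4 to 50 μm ranges respectively.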

Global warming

There is overwhelming evidence that human activities are to blame for the increasing concentration of carbon dioxide (CO2) in the atmosphere, and thus for the resulting global warming. This view is shared by virtually every scientific body and research organisation working on climate change. The current rise in global temperature has been triggered by an almost 50 percent increase in atmospheric CO2 concentration, from 280 ppm before the Industrial Revolution to 417 ppm in May 2020. The atmospheric CO2 concentration is the most reliable indicator of global warming we have right now. In 1960, CO2 was increasing by less than 1 ppm per year, whereas it is now increasing by 2.4 ppm per year.
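The “almost 50 percent” figure follows directly from the two concentrations quoted above. A minimal sketch of the arithmetic, using only the numbers in the text (the function and constant names are illustrative):

```python
PREINDUSTRIAL_PPM = 280.0  # concentration before the Industrial Revolution
MAY_2020_PPM = 417.0       # concentration quoted for May 2020


def percent_increase(old: float, new: float) -> float:
    """Relative increase from `old` to `new`, in percent."""
    if old <= 0:
        raise ValueError("old value must be positive")
    return (new - old) / old * 100.0


if __name__ == "__main__":
    rise = percent_increase(PREINDUSTRIAL_PPM, MAY_2020_PPM)
    print(f"CO2 increase: {rise:.1f}%")  # about 48.9%

    # Growth rate: under 1 ppm/yr in 1960 versus 2.4 ppm/yr now,
    # so the rate has grown by a factor of at least 2.4.
    print(f"Growth-rate ratio: at least {2.4 / 1.0:.1f}x")
```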

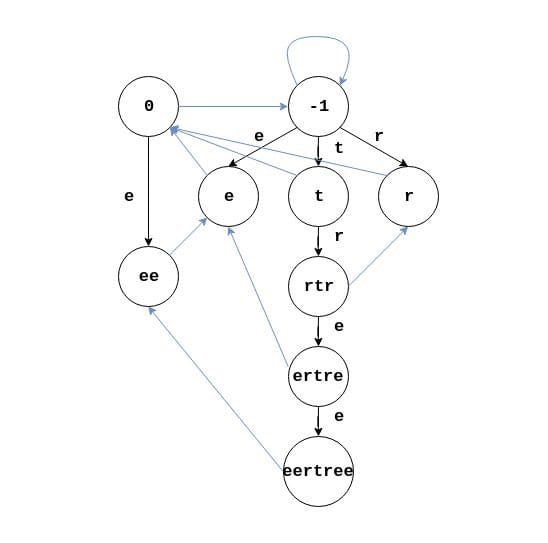

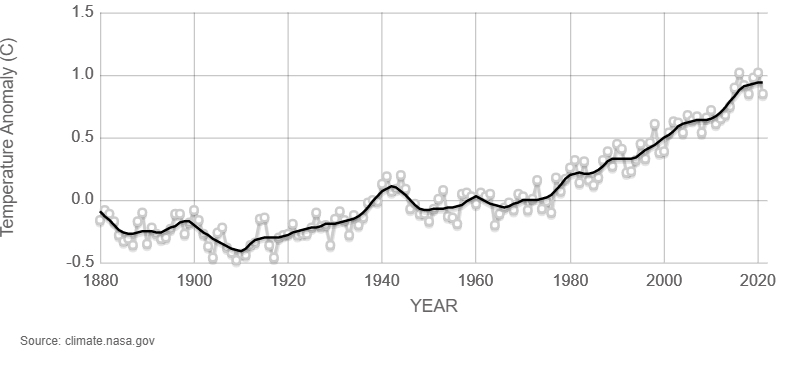

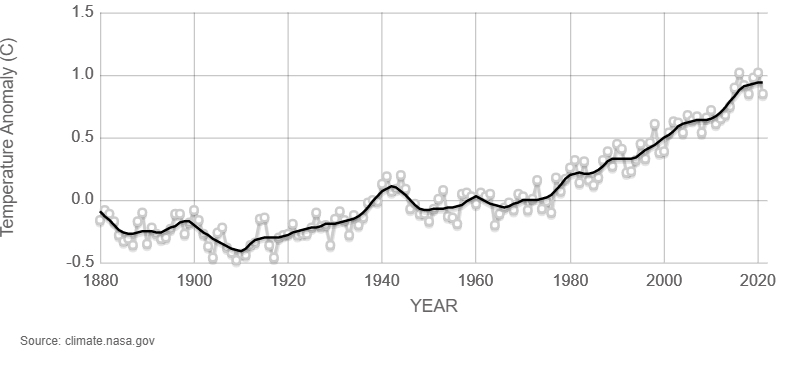

This rate of change is the best way to tell whether we are making progress in stopping global warming. At the moment, there are no signs of progress; in fact, the rate is still increasing. Even if we stopped burning fossil fuels, CO2 levels would take a long time to fall, because the lifetime of CO2 in the upper atmosphere is of the order of hundreds of years. The most convincing evidence that the rise in CO2 is the most likely cause of global warming comes from graphs of atmospheric CO2 concentration and global average temperature over the past several decades (see Fig. 1 and Fig. 2). Over the past 60 years, the global average temperature has followed a trend similar to that of CO2 levels. Fig. 3 shows average global temperatures from 2010 to 2022 compared with a baseline average from 1951 to 1980.

Figure 1: Carbon dioxide concentration level.

Source: NASA satellite observations.

Figure 2: Global temperature variation.

Source: NASA satellite observations.

Figure 3: Average global temperatures from 2010 to 2022 compared to a baseline average from 1951 to 1980.

Source: NASA Data

Impact of climate change

One of the most pressing challenges confronting humanity today is climate change and how to minimize the damage it causes. The problem is multifaceted, so solving it will require expertise in many disciplines, including science, economics, sociology, governance, and ethics. The consequences of global warming will be felt for generations, if not centuries. While it will be impossible to stop global warming completely, its rate is within our control. As the world’s temperatures continue to rise, the world’s economy, energy supply, environmental quality, and health will all suffer.

So far, the effects of climate change include:

- Earth is getting warmer: As temperatures rise, days of extreme heat that used to happen once every 20 years may now happen every 2 or 3 years on average. In 2016, every month from January to September except June was the warmest on record for that month (NASA, 2020c). The 10 warmest years in the 140-year record have all occurred since 2005, and the six hottest years on record were the six most recent (IPCC, 2018).

- Oceans are getting warmer: Over 90% of the warming that has happened on Earth in the past 50 years has happened in the oceans (NASA, 2020c). Rising sea levels, ocean heat waves, coral bleaching, severe storms, changes in marine ecosystems, and the melting of glaciers and ice sheets around Greenland and Antarctica are all caused by warmer oceans. The waters were warmer last year than at any time since measurements of ocean temperature started more than 60 years ago.

- Ice sheets are shrinking: Between 1993 and 2016, the Greenland ice sheet lost an average of 286 billion tonnes of ice per year. During the same period, the Antarctic ice sheet lost about 127 billion tonnes of ice per year. In the last ten years, the rate of ice mass loss in Antarctica has tripled (NASA).

- Glacial retreat: Most of the world’s glaciers are melting, including those in Africa, Alaska, the Alps, Andes, Himalayas, and the Rocky Mountains. Most of the sea level rise in the last few decades has been caused by glaciers and ice sheets melting. The melting of glaciers is a major threat to ecosystems and water supplies for people in many parts of the world.

- Sea level rise: The sea level rises when the oceans get warmer and glaciers and other ice start to melt. Ocean water also expands as it warms, which makes the sea level rise even more. In the last 100 years, the sea level rose about 20 cm around the world. In the last two decades, the rate of rise was twice that of the last century, and it is still accelerating. Flooding is getting worse and happening more often in many places.

- Increased frequency of extreme hydrological and meteorological events: Since the middle of the last century, there have been more events with record high temperatures and heavy rainfall. Since the early 1980s, hurricanes have been getting stronger, happening more often, and lasting longer. As the oceans continue to warm, hurricanes will get stronger and their rainfall rates will increase.

- Oceans are getting more acidic: Since the start of the Industrial Revolution, the surface waters of the oceans have become about 30% more acidic. The cause of this increase is that humans are releasing more carbon dioxide into the atmosphere, which causes more of it to be absorbed by the oceans. Carbon dioxide is being taken up by the top layer of the oceans at a rate of about 2 billion tonnes per year.
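A few of the figures in the list above can be put in perspective with simple arithmetic. The sketch below makes two assumptions that are not in the article: that “30% more acidic” means a 30% increase in hydrogen-ion concentration (so the pH shift is a base-10 logarithm), and that roughly 362 billion tonnes of melted land ice raise global sea level by about 1 mm. The function and constant names are illustrative.

```python
import math

GT_PER_MM_SEA_LEVEL = 362.0  # assumed: ~362 billion tonnes of ice per mm of sea level


def ph_change(percent_more_acidic: float) -> float:
    """pH shift for a given percentage increase in hydrogen-ion concentration."""
    return -math.log10(1.0 + percent_more_acidic / 100.0)


def cumulative_loss_gt(rate_gt_per_year: float, start_year: int, end_year: int) -> float:
    """Total ice lost (billion tonnes) at a constant average yearly rate."""
    return rate_gt_per_year * (end_year - start_year)


if __name__ == "__main__":
    # Ocean acidification: 30% more acidic is a pH drop of roughly 0.11
    print(f"pH change: {ph_change(30.0):.3f}")

    # Ice sheets, 1993 to 2016, using the average rates quoted in the list
    for name, rate in (("Greenland", 286.0), ("Antarctica", 127.0)):
        total = cumulative_loss_gt(rate, 1993, 2016)
        print(f"{name}: {total:.0f} billion tonnes, "
              f"about {total / GT_PER_MM_SEA_LEVEL:.0f} mm of sea level")
```

The logarithmic pH scale is why a seemingly small pH drop of about 0.1 corresponds to a substantial change in acidity.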

Future Scenario

According to reports by the Intergovernmental Panel on Climate Change (IPCC), the average global temperature is on track to rise by 3°C by the end of this century. The goal is to limit warming to 1.5°C, but reaching it will require “rapid, far-reaching, and unprecedented changes” in all parts of society: greenhouse gas emissions will need to fall to 45 percent below their 2010 levels by 2030. As noted above, even if all of these emissions stopped right now, the world’s temperature would still rise for decades because of the long-lasting effects of the atmosphere and oceans. Climate change affects the quality of our environment, our food supplies, our susceptibility to disease and other health problems, and our livelihoods. Most of these effects are already being felt, will be felt more in the future, and, sadly, will fall more heavily on the poor than on the rich.

There are a few different types of text-to-image generators. One type uses diffusion models. Diffusion models are trained on large datasets of hundreds of millions of images.

Each image is described in words (a caption) so the model can learn the relationship between text and images. During training, the model has also been observed to pick up other conceptual information, such as which elements make an image look clear and sharp.

Once trained, the model takes a text prompt provided by the user, creates a low-resolution (LR) image, and then gradually adds new details to turn it into a complete image. This process is repeated until the high-resolution (HR) image is produced.

Green dragon on table

Diffusion models don’t just modify existing images; they generate everything from scratch without referencing any images available online. If you ask one to generate an image of a “dragon on the table,” it does not search the internet for separate images of a dragon and a table and then combine them. Instead, it creates the image entirely from scratch, based on the understanding of text it acquired during training.
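The step-by-step refinement described above can be sketched as a toy loop. This is not a real diffusion model: `toy_denoiser` and `prompt_target` are hypothetical stand-ins for a trained neural network and for whatever the text prompt implies the image should look like, and the “image” is just a short list of numbers. The sketch only shows the shape of the process, which starts from a rough random image and improves it a little at every step.

```python
import random


def toy_denoiser(image, prompt_target, steps_left):
    """Hypothetical stand-in for a trained network.

    Nudges every pixel a fraction of the way toward the value the
    prompt implies; the fraction grows as fewer steps remain.
    """
    return [px + (target - px) / steps_left
            for px, target in zip(image, prompt_target)]


def generate(prompt_target, steps=10, seed=0):
    """Start from random noise and refine it step by step."""
    rng = random.Random(seed)
    image = [rng.gauss(0.0, 1.0) for _ in prompt_target]  # rough starting image
    for steps_left in range(steps, 0, -1):
        image = toy_denoiser(image, prompt_target, steps_left)
    return image


if __name__ == "__main__":
    # Pretend the prompt "green dragon on table" implies these four pixel values.
    target = [0.1, 0.8, 0.3, 0.5]
    print(generate(target))
```

In a real system the denoiser never sees a target image; it has only learned, from its training data, how images matching a given text tend to look.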

Sloth in pink water

Diffusion models have several benefits over other models. First, they are more efficient to train. Second, the images they generate are more realistic and better grounded in the real world. Third, they make the generated image easier to control: you can simply include the color of the dragon (say, a green dragon) in the text prompt, and the model will generate the image accordingly.
