In the very first Wisdom Week – LUMIÈRES conducted by CEV, Dr. Jignesh N Sarvaiya of the Electronics Engineering Department gave the students some really interesting insights into Digital Image Processing. Here is a brief summary of the topics he covered.

What is a digital image?

A digital image is a representation of a two-dimensional image as a finite set of digital values, called picture elements or pixels. Pixel values typically represent gray levels, colours, heights, opacities etc. Digitization implies that a digital image is an approximation of a real scene. Common image formats include black and white images, grayscale images and RGB images.

What is Digital Image Processing (DIP)?

Digital Image Processing means processing digital images by means of a digital computer. It uses computer algorithms to obtain an enhanced image or to extract useful information from it.

The continuum from image processing to computer vision can be broken up into low-, mid- and high-level processes which are explained below.

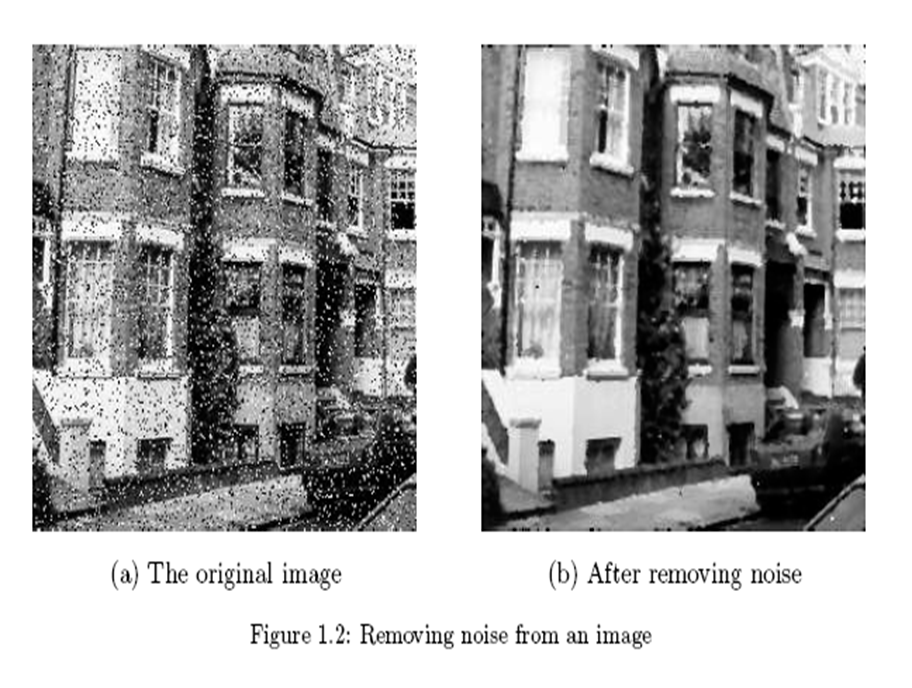

Low Level Process: where the input as well as the output is an image. Examples include noise removal and image sharpening.

Mid Level Process: where the input is an image and the output is a set of attributes extracted from it. Examples include object recognition and segmentation.

High Level Process: where the input is a set of attributes and the output is understanding, i.e. making sense of the recognized objects. Examples include scene understanding and autonomous navigation.
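Here is a toy sketch in plain Python that makes the low/mid distinction concrete. The function names are hypothetical and chosen only for illustration. A grayscale image is modelled as a list of rows of 8-bit gray levels. The low-level process returns another image, while the mid-level process returns an attribute computed from the image.

```python
def negative(img):
    """Low-level process: image in, image out.
    Computes the negative of an 8-bit grayscale image (s = 255 - r)."""
    return [[255 - p for p in row] for row in img]


def bright_fraction(img, threshold=128):
    """Mid-level process: image in, attribute out.
    Returns the fraction of pixels whose gray level is >= threshold."""
    pixels = [p for row in img for p in row]
    return sum(p >= threshold for p in pixels) / len(pixels)


img = [[0, 64],
       [128, 255]]
print(negative(img))         # [[255, 191], [127, 0]]
print(bright_fraction(img))  # 0.5
```

Real mid-level processes such as segmentation produce much richer attributes. The point here is only the shape of the input and the output.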

Representing Digital Images

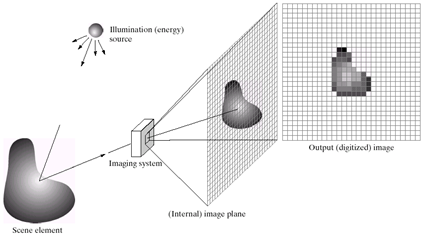

An image may be defined as a two-dimensional function $f(x, y)$, where $x$ and $y$ are spatial coordinates, and the amplitude of $f$ at any pair of coordinates $(x, y)$ is called the intensity of the image at that point.

A digital image can be represented as an $M \times N$ numerical array. If each pixel is stored with $k$ bits, the discrete intensity interval is $[0, L-1]$, where $L = 2^k$.

The number of bits $b$ required to store an $M \times N$ digitized image is therefore $b = M \times N \times k$.
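Here is a quick worked example of these formulas. The sketch uses hypothetical helper names and computes L and b for a 1024 × 1024 image stored with 8 bits per pixel.

```python
def intensity_levels(k):
    """Number of discrete gray levels L = 2**k, so intensities lie in [0, L - 1]."""
    return 2 ** k


def storage_bits(m, n, k):
    """Bits needed to store an M x N image with k bits per pixel: b = M * N * k."""
    return m * n * k


M, N, k = 1024, 1024, 8
L = intensity_levels(k)
b = storage_bits(M, N, k)
print(L, "levels, intensities 0 ..", L - 1)  # 256 levels, intensities 0 .. 255
print(b, "bits =", b // 8, "bytes")          # 8388608 bits = 1048576 bytes
```

At 8 bits per pixel this comes to exactly 1 MiB. Halving k to 4 halves b, but leaves only 16 gray levels.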

Why do we need DIP?

Image processing is a subclass of signal processing concerned specifically with pictures. It aims to improve image quality for human perception and/or computer interpretation.

It is motivated by three major applications: improving pictorial information for human perception, processing images for autonomous machine applications, and storing and transmitting images efficiently.

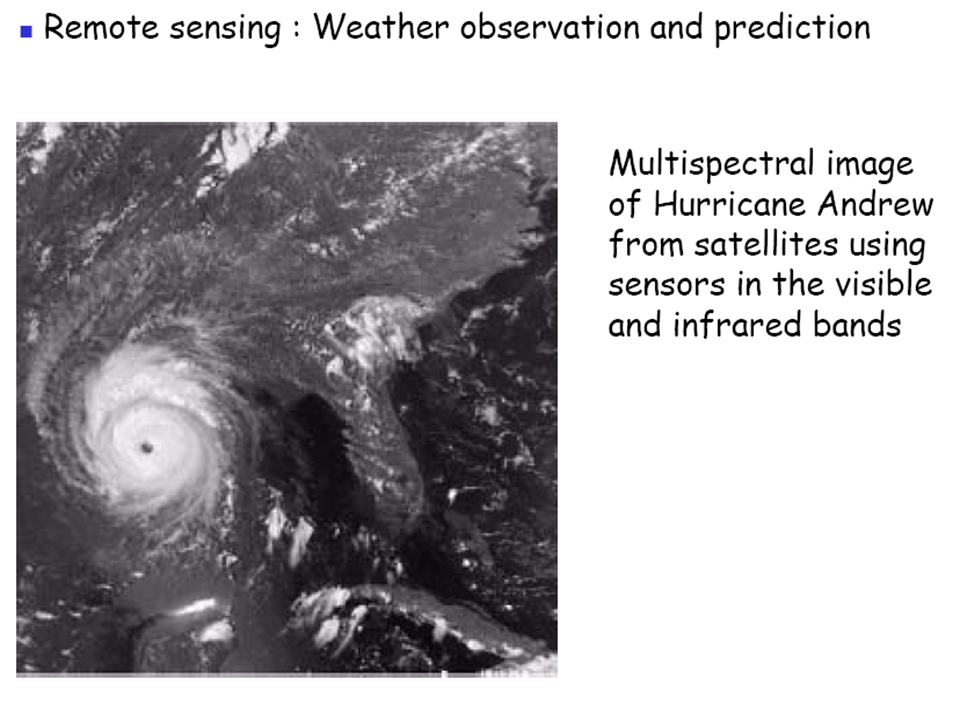

DIP employs methods that enhance information for human interpretation and analysis, such as noise filtering, content enhancement, contrast enhancement and deblurring. It is applied in areas such as remote sensing.

Fields Using DIP

The fields that use DIP can be grouped by the source of the images they work with:

- Radiation from the electromagnetic spectrum

- Acoustic

- Ultrasonic

- Electronic in the form of electron beams used in electron microscopy

- Synthetic images generated by computer, used for modelling and visualisation

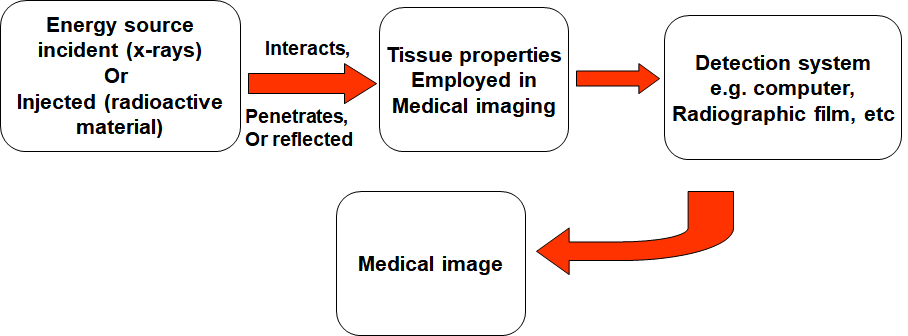

DIP in Medicine

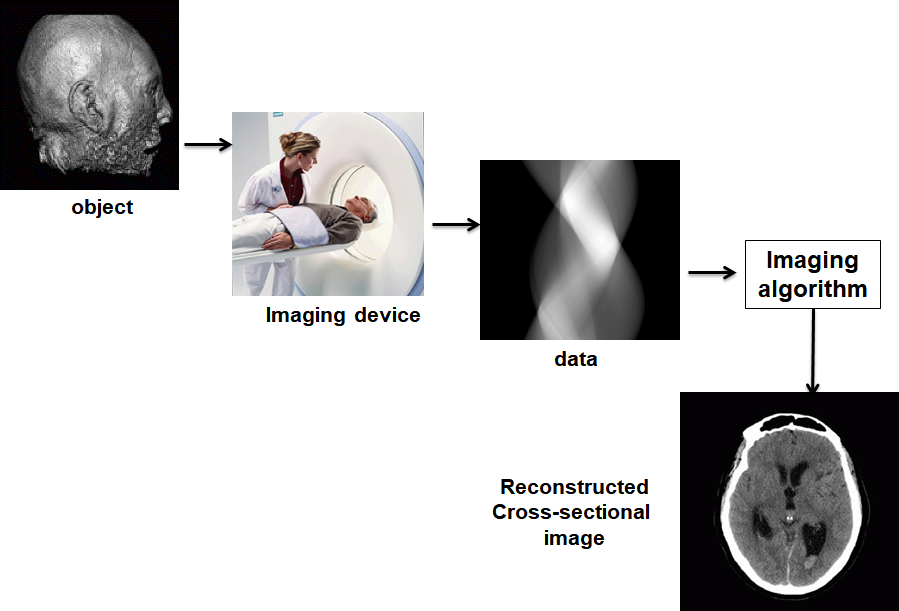

Medical imaging is the technique and process of creating visual representations of the interior of a body for clinical analysis and medical intervention, as well as visual representation of the function of some organs or tissues.

For example, we can take an MRI scan of a canine heart and find the boundaries between different types of tissue. The gray levels in the image represent tissue density, and a suitable filter can be applied to highlight the edges.
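The text does not name the filter. One common choice for highlighting edges is the Sobel operator, used here as an illustrative assumption rather than the filter from the talk. The sketch approximates the gradient magnitude as |Gx| + |Gy|. The test image is tiny and synthetic: two "tissues" of different gray level meet, and the response is large only along their boundary.

```python
def sobel_magnitude(img):
    """Approximate gradient magnitude |Gx| + |Gy| using 3x3 Sobel kernels.
    Border pixels are left at 0 to keep the sketch short."""
    gx_k = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]    # responds to horizontal change
    gy_k = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]    # responds to vertical change
    rows, cols = len(img), len(img[0])
    out = [[0] * cols for _ in range(rows)]
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            gx = gy = 0
            for j in range(3):
                for i in range(3):
                    p = img[y + j - 1][x + i - 1]
                    gx += gx_k[j][i] * p
                    gy += gy_k[j][i] * p
            out[y][x] = abs(gx) + abs(gy)
    return out


# Hypothetical image: density 10 on the left, density 90 on the right.
two_tissues = [[10, 10, 90, 90] for _ in range(4)]
for row in sobel_magnitude(two_tissues):
    print(row)
# [0, 0, 0, 0]
# [0, 320, 320, 0]
# [0, 320, 320, 0]
# [0, 0, 0, 0]
```

A uniform region gives zero response. Thresholding the output would keep only the boundary pixels.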

Overall Concept

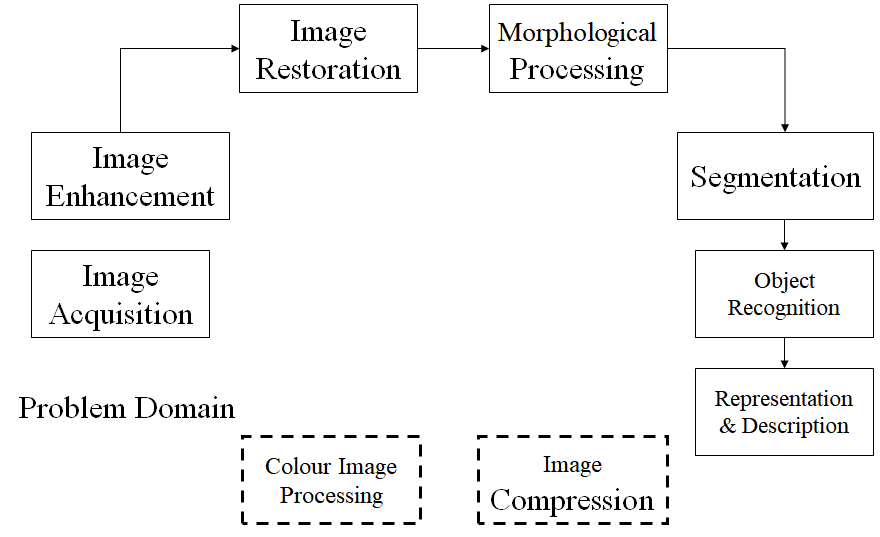

Key Stages in DIP

Let us understand these stages one by one.

- Image Acquisition: An image is captured by a sensor, such as a monochrome or colour camera, and digitized. If the output of the sensor is not already in digital form, it is digitized with an analog-to-digital converter. A camera contains two parts:
  - a lens, which collects the appropriate radiation and forms a real image of the object;
  - a semiconductor sensor (an array of photodiodes), which converts the irradiance of the image into an electrical signal.

  A frame grabber contains the circuitry that digitizes the electrical signal from the imaging sensor and stores it in the computer's memory.

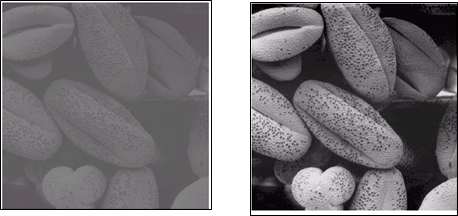

- Image Enhancement: It is used to bring out obscured details or to highlight features of interest in an image. It is commonly used to improve quality and remove noise from images.

- Image Restoration: It is the operation of taking a corrupted/noisy image and estimating the clean, original image. Corruption may come in many forms, such as motion blur, noise and camera misfocus.

- Morphological Processing: Morphological operations apply a structuring element to an input image, creating an output image of the same size. The value of each pixel in the output image is based on a comparison of the corresponding pixel in the input image with its neighbors.

- Segmentation: It is the process of partitioning a digital image into multiple segments to simplify and change the representation of an image into something that is more meaningful and easier to analyze.

- Object Recognition: Object recognition is a technique for identifying objects in digital images. It is a key application of machine learning and deep learning algorithms.

- Description and Representation: After an image is segmented into regions, the resulting aggregate of segmented pixels is represented and described for further computer processing. A region can be represented in one of two ways:
  - by its external characteristics (its boundary);
  - by its internal characteristics (the pixels comprising the region).

- Image Compression: It is applied to digital images to reduce their cost for storage or transmission.

- Colour Image Processing: A digital colour image is a digital image that includes colour information for each pixel. Colours are commonly distinguished from one another by their brightness, hue and saturation.

- Knowledge Base: Knowledge about a problem domain is coded into an image processing system in the form of a knowledge database.
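The digitization step of Image Acquisition can be illustrated with an idealized analog-to-digital converter. This simplified sketch assumes a uniform k-bit quantizer whose input range runs from 0 to `v_max`. Both `v_max` and the sample voltages are hypothetical. Real converters also sample in time and add their own noise.

```python
def digitize(samples, k, v_max=1.0):
    """Idealized k-bit ADC: map analog readings in [0, v_max] to integer
    codes 0 .. 2**k - 1 by uniform quantization with round-to-nearest."""
    top = 2 ** k - 1
    codes = []
    for v in samples:
        v = min(max(v, 0.0), v_max)               # clip to the converter's input range
        codes.append(int(v / v_max * top + 0.5))  # round to the nearest level
    return codes


readings = [0.0, 0.25, 0.5, 1.0, 1.2]  # hypothetical sensor voltages
print(digitize(readings, k=3))         # [0, 2, 4, 7, 7]
```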
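One of the simplest Image Enhancement techniques is linear contrast stretching. It spreads a narrow range of gray levels over the full 0 to 255 range. Here is a minimal sketch, assuming an 8-bit grayscale image stored as a list of rows.

```python
def contrast_stretch(img, out_max=255):
    """Linearly map the image's gray range [lo, hi] onto [0, out_max]."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:  # a flat image has no contrast to stretch
        return [row[:] for row in img]
    return [[round((p - lo) * out_max / (hi - lo)) for p in row] for row in img]


dull = [[100, 110],
        [120, 150]]  # gray levels occupy only 100..150
print(contrast_stretch(dull))  # [[0, 51], [102, 255]]
```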
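In Image Restoration, a 3 × 3 median filter is a classic way to estimate the clean image when the corruption is impulse (salt-and-pepper) noise. It is only one restoration technique among many. Blur and misfocus usually call for other methods, such as deconvolution. The sketch below uses Python's standard `statistics.median`.

```python
from statistics import median


def median_filter(img):
    """Replace each interior pixel by the median of its 3x3 neighbourhood.
    Border pixels are copied unchanged to keep the sketch short."""
    rows, cols = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            window = [img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            out[y][x] = median(window)
    return out


noisy = [[50, 50, 50],
         [50, 255, 50],   # a single "salt" pixel
         [50, 50, 50]]
print(median_filter(noisy))  # [[50, 50, 50], [50, 50, 50], [50, 50, 50]]
```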
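The Morphological Processing description above can be sketched for binary images with a 3 × 3 square structuring element. Each output pixel is computed by comparing the corresponding input pixel with its neighbours, and the output has the same size as the input. Treating pixels outside the image as background (0) is an assumption of this sketch.

```python
def neighbourhood(img, y, x):
    """Pixel values covered by a 3x3 square structuring element centred at (y, x).
    Pixels outside the image are treated as 0 (background)."""
    rows, cols = len(img), len(img[0])
    return [
        img[y + dy][x + dx] if 0 <= y + dy < rows and 0 <= x + dx < cols else 0
        for dy in (-1, 0, 1)
        for dx in (-1, 0, 1)
    ]


def dilate(img):
    """Binary dilation: output is 1 if ANY pixel under the element is 1."""
    return [[int(any(neighbourhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


def erode(img):
    """Binary erosion: output is 1 only if ALL pixels under the element are 1."""
    return [[int(all(neighbourhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


dot = [[0, 0, 0, 0, 0],
       [0, 0, 0, 0, 0],
       [0, 0, 1, 0, 0],
       [0, 0, 0, 0, 0],
       [0, 0, 0, 0, 0]]
grown = dilate(dot)
for row in grown:
    print(row)
# [0, 0, 0, 0, 0]
# [0, 1, 1, 1, 0]
# [0, 1, 1, 1, 0]
# [0, 1, 1, 1, 0]
# [0, 0, 0, 0, 0]
print(erode(grown) == dot)  # True: eroding the 3x3 block leaves only its centre
```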
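A minimal Segmentation sketch combines two steps: global thresholding with a fixed threshold `t`, and 4-connected component labelling. The threshold is chosen by hand here as an assumption. The result is that each bright object becomes its own labelled region.

```python
from collections import deque


def threshold(img, t):
    """Global thresholding: pixels brighter than t become foreground (1)."""
    return [[1 if p > t else 0 for p in row] for row in img]


def label_regions(binary):
    """Label 4-connected foreground regions 1, 2, 3, ... using breadth-first search.
    Returns the label image and the number of regions found."""
    rows, cols = len(binary), len(binary[0])
    labels = [[0] * cols for _ in range(rows)]
    current = 0
    for y in range(rows):
        for x in range(cols):
            if binary[y][x] and not labels[y][x]:
                current += 1                     # start a new region
                labels[y][x] = current
                queue = deque([(y, x)])
                while queue:
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if (0 <= ny < rows and 0 <= nx < cols
                                and binary[ny][nx] and not labels[ny][nx]):
                            labels[ny][nx] = current
                            queue.append((ny, nx))
    return labels, current


img = [[200, 200, 10, 10],
       [200, 10, 10, 180],
       [10, 10, 10, 180]]
labels, n = label_regions(threshold(img, 128))
print(n)       # 2
print(labels)  # [[1, 1, 0, 0], [1, 0, 0, 2], [0, 0, 0, 2]]
```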
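The two representation choices under Description and Representation can be illustrated on a single binary region. This sketch uses simple descriptors of its own choosing:
- internal characteristics: area and centroid, computed from the region's pixels;
- external characteristics: boundary, summarized as a count of boundary pixels.

The sketch defines a boundary pixel as a region pixel with at least one 4-neighbour outside the region. That definition is an assumption.

```python
def region_descriptors(mask):
    """Describe one binary region (1 = region pixel).
    Internal description: area and centroid of the region's pixels.
    External description: number of boundary pixels, i.e. region pixels with
    at least one 4-neighbour outside the region or outside the image."""
    rows, cols = len(mask), len(mask[0])
    pixels = [(y, x) for y in range(rows) for x in range(cols) if mask[y][x]]
    area = len(pixels)
    cy = sum(y for y, _ in pixels) / area
    cx = sum(x for _, x in pixels) / area

    def inside(y, x):
        return 0 <= y < rows and 0 <= x < cols and mask[y][x]

    boundary = sum(
        1 for y, x in pixels
        if not all(inside(y + dy, x + dx) for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)))
    )
    return {"area": area, "centroid": (cy, cx), "boundary_pixels": boundary}


square = [[0, 0, 0, 0, 0],
          [0, 1, 1, 1, 0],
          [0, 1, 1, 1, 0],
          [0, 1, 1, 1, 0],
          [0, 0, 0, 0, 0]]
print(region_descriptors(square))
# {'area': 9, 'centroid': (2.0, 2.0), 'boundary_pixels': 8}
```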
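Run-length encoding is one of the simplest lossless schemes used in Image Compression. It works well on images with long runs of identical pixels, such as binary images. This sketch is purely illustrative: practical formats such as PNG and JPEG use more elaborate coding.

```python
def rle_encode(row):
    """Run-length encode a sequence of pixel values as (value, run_length) pairs."""
    runs = []
    for p in row:
        if runs and runs[-1][0] == p:
            runs[-1][1] += 1       # extend the current run
        else:
            runs.append([p, 1])    # start a new run
    return [tuple(r) for r in runs]


def rle_decode(runs):
    """Expand (value, run_length) pairs back into the original pixel sequence."""
    return [value for value, length in runs for _ in range(length)]


row = [0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 0, 0]
encoded = rle_encode(row)
print(encoded)                     # [(0, 4), (1, 2), (0, 6)]
assert rle_decode(encoded) == row  # lossless: decoding restores the row exactly
```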
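This sketch shows how colours differ in hue, saturation and brightness. It uses Python's standard `colorsys` module, which converts RGB to the HSV model (hue, saturation, value). HSV's value component stands in for brightness here. HSV is only one of several such colour models; HSI is another.

```python
import colorsys


def describe_pixel(r, g, b):
    """Convert an 8-bit RGB pixel to (hue in degrees, saturation, value),
    with saturation and value rounded to two decimals in the range 0..1."""
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    return round(h * 360), round(s, 2), round(v, 2)


print(describe_pixel(255, 0, 0))      # (0, 1.0, 1.0)  pure red
print(describe_pixel(255, 128, 128))  # (0, 0.5, 1.0)  pink: same hue, less saturated
print(describe_pixel(128, 128, 128))  # (0, 0.0, 0.5)  gray: no saturation
```

Red and pink share the same hue and differ only in saturation. A neutral gray has no saturation at all.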

Types of Digital Images

- Intensity image or monochrome image: Each pixel corresponds to light intensity normally represented in gray scale.

- Color image or RGB image: Each pixel contains a vector representing red, green and blue components.

- Binary image or black and white image: Each pixel contains one bit; 1 represents white and 0 represents black.

- Indexed image: Each pixel contains an index number pointing to a color in a color table (color map).

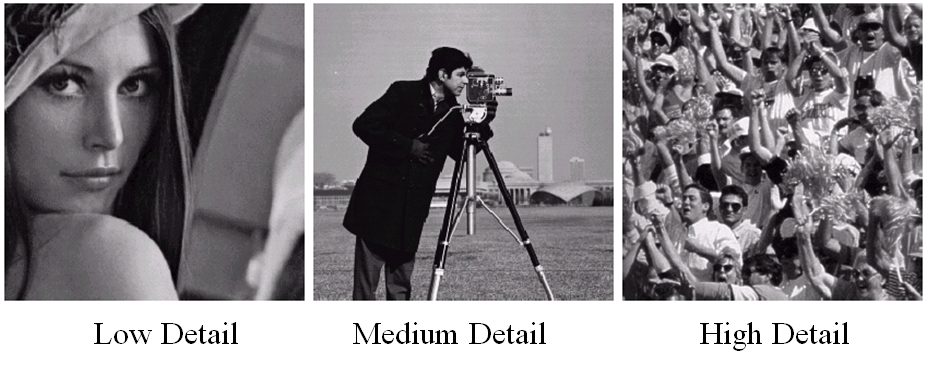
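This sketch shows how an indexed image and a binary image relate to the other types. The 4-entry colour table and the threshold of 128 are hypothetical values chosen for illustration. Looking up each index in the colour table yields an RGB image, and thresholding a grayscale image yields a binary one.

```python
# Hypothetical 4-entry colour table (palette): index -> (R, G, B)
palette = [(0, 0, 0), (255, 0, 0), (0, 255, 0), (255, 255, 255)]
indexed = [[0, 1],
           [2, 3]]  # each pixel stores an index into the palette


def indexed_to_rgb(img, table):
    """Replace every index by its colour-table entry to obtain an RGB image."""
    return [[table[i] for i in row] for row in img]


def gray_to_binary(img, t=128):
    """Threshold a grayscale image: 1 (white) where p >= t, else 0 (black)."""
    return [[1 if p >= t else 0 for p in row] for row in img]


print(indexed_to_rgb(indexed, palette))
# [[(0, 0, 0), (255, 0, 0)], [(0, 255, 0), (255, 255, 255)]]
print(gray_to_binary([[12, 200], [128, 90]]))
# [[0, 1], [1, 0]]
```

An indexed image stores small integers per pixel, which saves memory when the image uses only a few distinct colours.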

Image Resolution

Resolution refers to the number of pixels in an image. The amount of resolution required depends on how much detail we are interested in. We will now take a look at the spatial and intensity level resolution of a digital image.

Spatial Resolution: It is a measure of the smallest discernible detail in an image. Vision specialists state it in dots (pixels) per unit distance, while graphic designers state it in dots per inch (dpi).

Intensity Level Resolution: It refers to the number of intensity levels used to represent the image. The more intensity levels used, the finer the level of detail discernible in an image. Intensity level resolution is usually given in terms of the number of bits used to store each intensity level.
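Both kinds of resolution can be reduced in a few lines. The sketch below uses hypothetical helper names:
- spatial resolution is lowered by simple subsampling;
- intensity resolution is lowered by keeping only the top k bits of each 8-bit pixel.

Real systems usually low-pass filter before subsampling to avoid aliasing.

```python
def downsample(img, factor):
    """Lower spatial resolution: keep every `factor`-th pixel in each direction."""
    return [row[::factor] for row in img[::factor]]


def requantize(img, k):
    """Lower intensity resolution of an 8-bit image to 2**k levels by zeroing
    the (8 - k) low-order bits; values stay in the 0..255 range."""
    shift = 8 - k
    return [[(p >> shift) << shift for p in row] for row in img]


img = [[4 * r + c for c in range(4)] for r in range(4)]  # 4 x 4 test image
print(downsample(img, 2))                             # [[0, 2], [8, 10]]
print(requantize([[0, 37, 100, 130, 200, 255]], 2))   # [[0, 0, 64, 128, 192, 192]]
```

With k = 1 only two levels (0 and 128) survive, which is where false contouring becomes obvious.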

Computer Vision: Some Applications

- Optical character recognition (OCR)

- Face Detection

- Smile Detection

- Vision based biometrics

- Login without password using fingerprint scanners and face recognition systems

- Object recognition in mobiles

- Sports

- Smart Cars

- Panoramic Mosaics

- Vision in space

We hope you gained some insights into digital image processing and computer vision. Thanks for reading!